Malaysia’s Greatest Crisis: Loss of National Pride and Unity

by Murray Hunter

Love him or hate him, Mahathir Mohamad was able, during his first stint as prime minister, to instill a great sense of national pride and unity.

Mahathir went on a massive infrastructure drive. Most Malaysians were proud of the Penang Bridge that finally linked the island with the mainland. The North-South Highway project changed the nature of commuting up and down the peninsula. Kuala Lumpur International Airport (KLIA) was built, and the development of Putrajaya gave the country a new seat of administration.

Mahathir’s crowning achievement was the building of the KLCC towers in central Kuala Lumpur, which were the tallest in the world at the time. These buildings are now the country’s major icon. Langkawi became a must-visit holiday destination for Malaysians. He brought elite Formula One motor racing to the country and built a purpose-built circuit for the event. He promoted the Tour de Langkawi as a local version of the Tour de France. He spared no expense in building massive new sporting complexes at Bukit Jalil to host the Commonwealth Games in 1998.

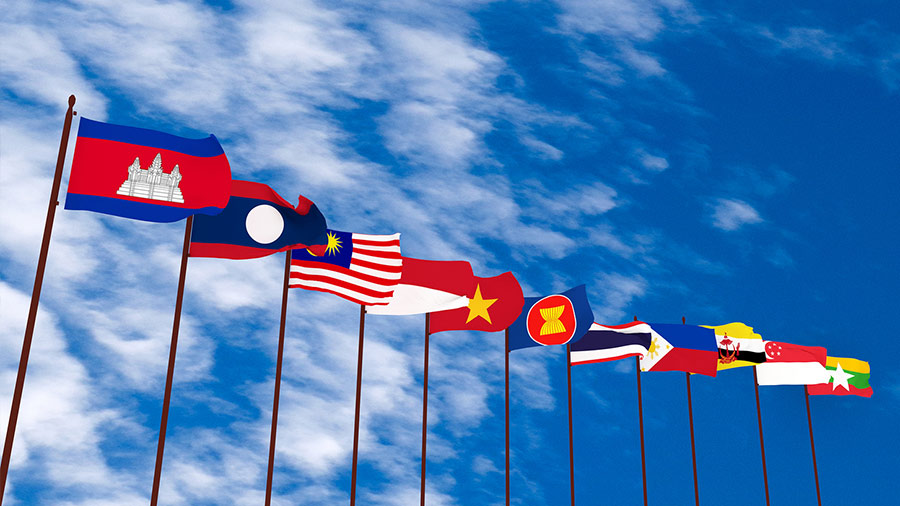

When the member nations of ASEAN abandoned the idea of building a regional car, Mahathir went it alone, drawing on older technology from Mitsubishi to create the Proton Saga. For better or worse, the national car project has been roundly criticized for losing hundreds of millions of dollars and for costing consumers even more in lost opportunity.

Nonetheless, Malaysia became an Asian Tiger and Mahathir himself became an outspoken leader internationally. The country was proud of what it had achieved. He knew the value of national symbols. The slogan Malaysia Boleh (Malaysia Can) was often heard along with the waving of the Jalur Gemilang (Stripes of Glory, the Malaysian flag) at public displays of national pride and unity.

The Barisan Nasional was a working government coalition that symbolized national unity through the make-up of the cabinet and its truly multi-ethnic flavor. Ministers like Samy Vellu from the Malaysian Indian Congress and Ling Liong Sik from the Malaysian Chinese Association had high public profiles.

Although Mahathir was labeled an ultra-conservative Malay, he worked with anyone who could help him fulfil his vision. Businessmen like Vincent Tan, Robert Kuok, Lim Goh Tong, Ananda Krishnan and Tony Fernandes all had very close relationships with Mahathir. Malaysia Inc. was more important to Mahathir than Malay supremacy.

That was 30 years ago. Since then, the prime casualty has been national pride and unity. The generally positive perception of the Mahathir era changed drastically when he abruptly sacked his deputy Anwar Ibrahim in 1998. The accusations against Anwar and his conviction for sodomy polarized the population. The goodwill that Mahathir had built up over more than 25 years in public life was called into question.

Although his intention was to eliminate his nemesis Anwar from politics, in doing so he made sodomy a household word in a conservative society, taking the luster off his legacy. The Anwar propaganda machine and the alternative media painted him as a tyrant with millions of dollars hidden away in foreign banks. In addition, two years of headlines and court reports about Anwar’s sodomy trial robbed the country of a sense of innocence and exposed Malaysia’s ‘dark side’, with TV pictures every day of a stained mattress, on which Anwar was convicted of committing sodomy, being carted into and out of court.

Under weak successors, belief in government faltered further. Respect for national leaders took another hit when Mahathir’s successor Abdullah Ahmad Badawi was portrayed as someone who slept on the job and enjoyed a luxurious lifestyle while many suffered economically. The PKR propaganda machine painted Badawi as corrupt, and the dealings of his son-in-law and political adviser Khairy Jamaluddin were portrayed as corrupt nepotism.

Mahathir engineered an ungraceful exit for Badawi, who was replaced by Najib Razak in 2009. The Najib premiership was tainted from the outset by rumors of murder and corruption. Najib’s wife Rosmah also became an object of ridicule, bringing respect for the institution of government to an all-time low.

However, it is not just the corruption of politicians that has destroyed respect for Malaysian institutions. The rakyat (people) have always wanted to believe in royalty. Even with stories about royal misdoings, there is no real talk of abolishing the monarchy. Whenever a member of one of the royal families acts in the interests of the rakyat, there is public praise and support. However, when members of a royal family act against the interests of the rakyat, social media reacts.

Stories have been circulating for years about the misdeeds of the Johor royal family. The current spat between Mahathir and Tunku Ismail, the Johor Crown Prince commonly known as TMJ, is extremely damaging to the royal institutions. Only the Sedition Act, a de facto lese-majeste law, is protecting the institution from much wider criticism.

Royal decorations and titles, VVIP service in government offices and special treatment for some citizens over others show a muddled Malaysia still clinging to the vestiges of feudalism. These artefacts, a hangover from the old days of colonial class distinction, do nothing to unite the country.

However, the most powerful source of destruction for national pride and unity is the ketuanan Melayu (Malay superiority) narrative, which has become much more extreme. One of its basic assumptions is that bumiputeras (indigenous peoples) are the rightful owners of the land. From the point of view of ketuanan proponents, the land is not a shared national symbol, and non-Malays are excluded from it. This is a great barrier to developing any sense of national pride and unity.

The gulf between Malay and non-Malay has widened dramatically over the last two generations as Islam has grown into a major aspect of Malay identity. Citizens once celebrated their diverse ethnicities in harmony. Decrees made in the name of Islam now discourage this. No longer are Hari Raya, Chinese New Year, Deepavali and Christmas shared Malaysian experiences.

The way of life has become Islamized to the point where there is little place for other religions and traditions. Food, dress codes, entertainment, education, the civil service, government, police and the military are all Islamized.

Shared apprehensions about what Malaysia will become have caused the Chinese to close ranks. The influence of ketuanan Melayu in government policy excludes non-Malays from participating in many fields, such as education, the civil service and the military. Younger Chinese today tend to see themselves as Chinese first and Malaysians second. As a collective defence mechanism, Chinese schools promote the Chinese language and a strong sense of Chinese culture over a Malaysian identity.

The New Economic Policy (NEP), put in place in 1971 after the disastrous race riots of 1969 as an affirmative action program for the majority Malays, has also done a disservice to those it was designed to help. Its faulty grounding assumption is the thesis of Mahathir’s book The Malay Dilemma: that Malays were basically lazy and needed help from the government. The NEP is, in effect, an attack on Malay self-esteem.

Rather than offering something spiritual, Islam has become a doctrine of conformity, where particular rites and rituals must legally be adhered to. Failing to observe them, for example by not fasting during Ramadan, can lead to punitive legal action. Any views outside narrow social norms draw heavy criticism. Just recently, the Selangor Islamic Religious Department (JAIS) started investigating a discussion forum on women’s choice about wearing the hijab. It is not only freedom of discussion that is stifled, but also the freedom to be creative.

Islam has buried the principles of the Rukun Negara (national principles), the supposed guiding philosophy of the nation. The Rukun Negara was once a symbol of national pride and unity, but before public events it has been almost totally replaced by a doa (prayer). A sense of nation has been sacrificed to the Islamization of public gatherings.

Today we see much less flag-waving during the Merdeka season. There are more divisive narratives on all ethnic sides. There is disappointment with the political system. Islam is seen by many as something overpowering rather than emancipating. People feel they need to conform to be accepted in society.

National pride and unity are at their lowest ebb since independence. After 30 years of this education system, younger generations of Malays see Islam as more important than nationalism. Chinese and Indians are apprehensive about what Malaysia is turning into. Even the Orang Asli (the original inhabitants of the peninsula before the arrival of ethnic Malays from Indonesia) and the non-Muslim indigenous peoples of Sabah and Sarawak see themselves as second-class citizens.

Malaysia has travelled far from the aspirations of Tunku Abdul Rahman when the Jalur Gemilang was raised for the first time over a free Malaya in 1957. Malaysia’s economic prosperity is declining relative to others in the region, and the nation is increasingly strangled by the need to conform. Malaysia appears to be a ship without a rudder, its reform agenda locked away under the Official Secrets Act.

The possibility of racial violence erupting once again cannot be overlooked. Divisive narratives are being pushed until one day an unknown tipping point could be reached. The strong sense of social conformity, the denial of a shared sense of national ownership to all, the current totalitarian nature of authority and ketuanan Melayu narratives are a very dangerous mix.

Murray Hunter is a regular Asia Sentinel contributor. He is a development specialist and a longtime resident of the region.